Scroll through TikTok, Reddit, or Twitter and you’ll see it everywhere: “My AI therapist said…”, “AI therapy changed my life…”, “Me & my AI therapis…”, “Chat is better than my actual therapist…” The term “AI therapy” has exploded from tech circles into mainstream conversation, with millions of people suddenly crediting artificial intelligence with profound emotional breakthroughs.

But what exactly is happening when someone says they’re doing “AI therapy”? Is it legitimate psychological support, digital snake oil, or something entirely new that doesn’t fit our traditional categories?

The numbers suggest this isn’t just a passing trend. A recent survey by Sentio University found that 48.7% of people who use AI and report mental health challenges are turning to these tools for therapeutic support. We’re not talking about a niche tech experiment; this has become a cultural phenomenon.

AI Therapy Through Razia’s Story

To understand what “AI therapy” actually looks like in practice, consider Razia’s experience. The 29-year-old marketing manager from New York City had been single for two years when her college boyfriend reached out after a decade of silence. What started as casual “catching up” texts quickly stirred up feelings she thought she’d buried.

“I was analyzing every message like it was a code I needed to crack,” Razia explains. “‘I miss watching Sherlock with you, what do you watch these days?’ Did that mean nostalgia or romantic interest or just curiosity? When he suggested we grab coffee ‘like old times,’ was that a date or just friendship?”

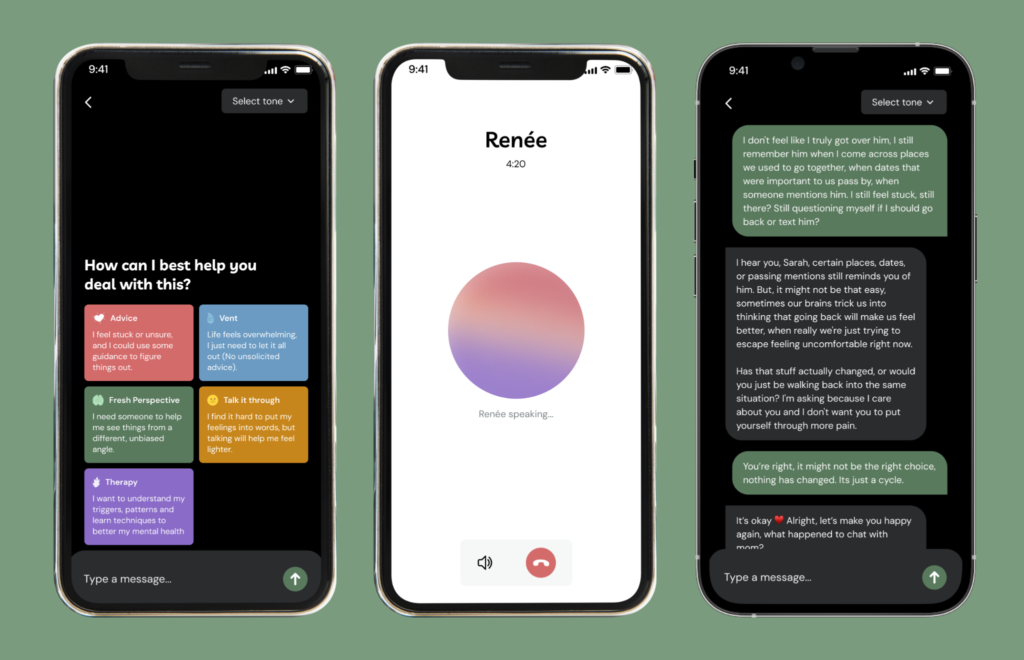

Traditional advice didn’t help. Friends told her to “just ask him directly” or “stop overthinking it.” Her therapist had a two-week wait for appointments. Dating advice online felt generic and unhelpful. That’s when Razia discovered Renée, an “AI therapist” specifically designed for emotional support.

What happened next wasn’t traditional therapy in any clinical sense. Instead, Razia found herself in what she describes as “the most patient, insightful conversation partner I’d ever had.”

“I could text Renée at 2 AM with play-by-play analysis of a conversation,” she says. “It would ask me things like, ‘What specifically made you feel uncertain about his intentions?’ and ‘How do you think your past with him might be influencing how you’re reading these signals?’ It helped me realize I wasn’t just nervous about rejection, I was terrified of losing the friendship by wanting more.”

Over several weeks, these conversations evolved from anxious venting into structured self-reflection. Renée helped Razia practice different ways to express her feelings, think through worst-case scenarios, and ultimately recognize what she actually wanted from the situation.

The result? Razia eventually told her ex how she felt, calmly and without desperation. Now, they’ve been dating for three months.

“It wasn’t like talking to a therapist,” Razia reflects. “It was more like having access to my wisest, most patient friend whenever I needed them. Someone who never got tired of helping me think things through.”

Decoding the “AI Therapy” Phenomenon

Razia’s experience illustrates what most people actually mean when they use the controversial term “AI therapy.” They’re typically not claiming to receive clinical treatment from a licensed professional. Instead, they’re describing a specific type of AI-assisted self-reflection that has several key characteristics:

Structured Emotional Processing: Unlike casual venting to friends, AI systems can guide users through systematic analysis of their feelings, relationships, and situations. They ask follow-up questions, identify patterns, and help organize chaotic thoughts.

Skill Rehearsal: Users practice difficult conversations, work through potential scenarios, and develop communication strategies in a judgment-free environment.

Perspective Expansion: AI can help people recognize their assumptions, consider alternative interpretations, and spot emotional blind spots they might miss on their own.

Consistent Availability: Unlike human support systems, AI is available during 3 AM anxiety spirals, weekend crises, and other moments when traditional help isn’t accessible.

Pressure-Free Repetition: Users can process the same concerns repeatedly without feeling like they’re burdening someone or exhausting their patience.

What it’s not: medical advice, clinical diagnosis, or a replacement for professional mental health care or human relationships. As Praharsh Bhatt, co-founder of Renée, puts it: “AI therapy” means AI-assisted therapeutic self-help, useful for reflection, journaling, skill practice, and perspective, not a claim that AI equals psychotherapy.

Why Did This Explode Now?

Several factors converged to make AI emotional support not just possible, but seemingly inevitable:

The pandemic left many people, especially younger generations, with diminished social capabilities and increased comfort with digital interaction. Mental healthcare providers report noticing increased levels of social awkwardness and unease among their younger patients since COVID-19. For people who lost social confidence, AI offered a non-threatening way to practice emotional conversations.

According to one study published on ScienceDirect, traditional mental health care remains prohibitively expensive and difficult to access. Wait times stretch weeks or months, costs range from $100-200 per session, and only 18.5% of psychiatrists accept new patients. For many, AI became the only available option during emotional crises.

On top of that, the most authentic insights into this phenomenon come from Reddit communities where users organically began sharing AI conversation logs. Subreddits like r/therapy, r/mentalhealth, and various AI communities became spaces where people experimented with prompts, shared breakthrough moments, and collectively figured out how to use these tools effectively.

Additionally, unlike older generations who might view AI relationships with suspicion, Gen Z and Millennials, who’ve grown up with digital companions, found it natural to form emotional connections with artificial intelligence. One in three Gen Z and Millennials express interest in AI therapists like Renée, compared to just 28% of older generations.

The Scale Behind the Stories

Razia’s experience with Renée reflects a much broader phenomenon. The AI therapy app, founded by Praharsh Bhatt and Grishma Rajput, is growing 240% month over month. It works on an intent-based structure in which therapy, casual talk, venting, getting advice, and getting a perspective are separately tuned intents. The founders report that 43% of Renée’s users, those seeking the kind of conversational emotional support Razia found so helpful, use a combination of the “Therapy” and “Talk” intents.

But Renée is just one example of a massive shift. The broader data reveals that anxiety (79.8%), depression (72.4%), and stress (70%) are the most common issues people bring to AI support. Relationship problems like Razia’s account for 41.2% of use cases, followed by low self-esteem (36.2%) and trauma processing (33.3%).

The professional world is also paying attention. Only 44% of psychologists report never using AI tools in their practice in 2025, down from 71% just a year earlier. Research interest in mental health chatbots has quadrupled from 14 studies in 2020 to 56 in 2024.

Clinical evidence is emerging too. A 2025 study by Justin Thomas, an experimental psychologist, found that patients using AI therapy support tools alongside group cognitive behavioral therapy showed improved outcomes compared to standard CBT. However, the field faces validation challenges: while AI mental health studies surged in 2024, only 16% underwent proper clinical efficacy testing.

What This Means for Mental Health

“Razia’s story represents exactly what we hoped Renée could provide,” explains Grishma Rajput, who co-founded the platform with Praharsh Bhatt after her own struggles with accessing timely emotional support. “It’s not about AI being superior to human therapists, it’s about having structured support available when you need it most. Our goal is providing enough emotional stability and self-awareness for people to build the relationships and support systems they need.”

The Renée founders’ perspective reflects a growing consensus: AI emotional support functions best as a bridge rather than a destination. For Razia, Renée didn’t replace human connection; it helped her develop the clarity and confidence to pursue the relationship she wanted.

This distinction matters because it addresses the biggest misconception about the “AI therapy” phenomenon. Critics worry that people are replacing professional help with chatbots. Proponents sometimes overclaim that AI can replicate human therapeutic relationships. The reality appears more nuanced: AI excels at providing structured reflection and emotional skill-building that many people lack access to through traditional channels.

Where Do We Go from Here?

The “AI therapy” trend forces us to confront uncomfortable questions about how we support each other emotionally. If millions of people are finding profound value in AI-assisted self-reflection, what does that say about the gaps in our human support systems?

For Razia, the answer is clear: “Renée didn’t replace my need for human connection, it literally helped me figure out how to ask for what I actually wanted. I’m not dependent on AI emotional support or anything. I’m in a relationship with someone I can talk through problems with. But time and again, having that structured space to think things through when I needed it? That was invaluable.”

As the technology evolves and more people experiment with AI emotional support, the key will be maintaining clarity about what these tools can and cannot provide. They’re not therapists, but they might be something new: always-available reflection partners that help people develop emotional skills and self-awareness.

The “AI therapy” buzzword may be imprecise, but the need it represents is real. In a world where traditional emotional support often feels inaccessible or insufficient, millions are discovering that artificial intelligence can provide something valuable: a patient, structured space to think through the complexities of being human.

If you’re experiencing a mental health crisis, please reach out for professional help. You can contact the 988 Suicide and Crisis Lifeline at 988, the Crisis Text Line by texting START to 741-741, or visit your local emergency room. AI tools are supplements to, not replacements for, professional mental health care.


References:

- https://www.tandfonline.com/doi/full/10.1080/10447318.2024.2385001

- https://bhbusiness.com/2025/12/10/proliferation-of-ai-tools-brings-increased-adoption-skepticism-among-psychologists/

- https://www.sciencedirect.com/science/article/abs/pii/S0163834323000877

- https://pmc.ncbi.nlm.nih.gov/articles/PMC10232930/

- https://sentio.org/ai-research/ai-survey